Google's AI policy prohibits the creation of deepfakes, but a concerning workaround was discovered in Gemini's latest photo-editing capabilities that allows deepfakes when the model is provided with a relevant image.

Google's AI platform Gemini has made significant strides in photo editing and generation. However, as with any cutting-edge technology, it comes with its share of challenges and ethical concerns.

One such concern is the potential for copyright and privacy infringement. According to the AI chatbot, it's the responsibility of users to ensure that the content they generate does not violate anyone's rights. This is particularly relevant with Gemini's latest release, Gemini 2.5 Flash Image, which expands photo editing capabilities and allows for advanced image generation, fusion of up to three images, surreal art creation, and seamless merging of objects, colours, and textures.

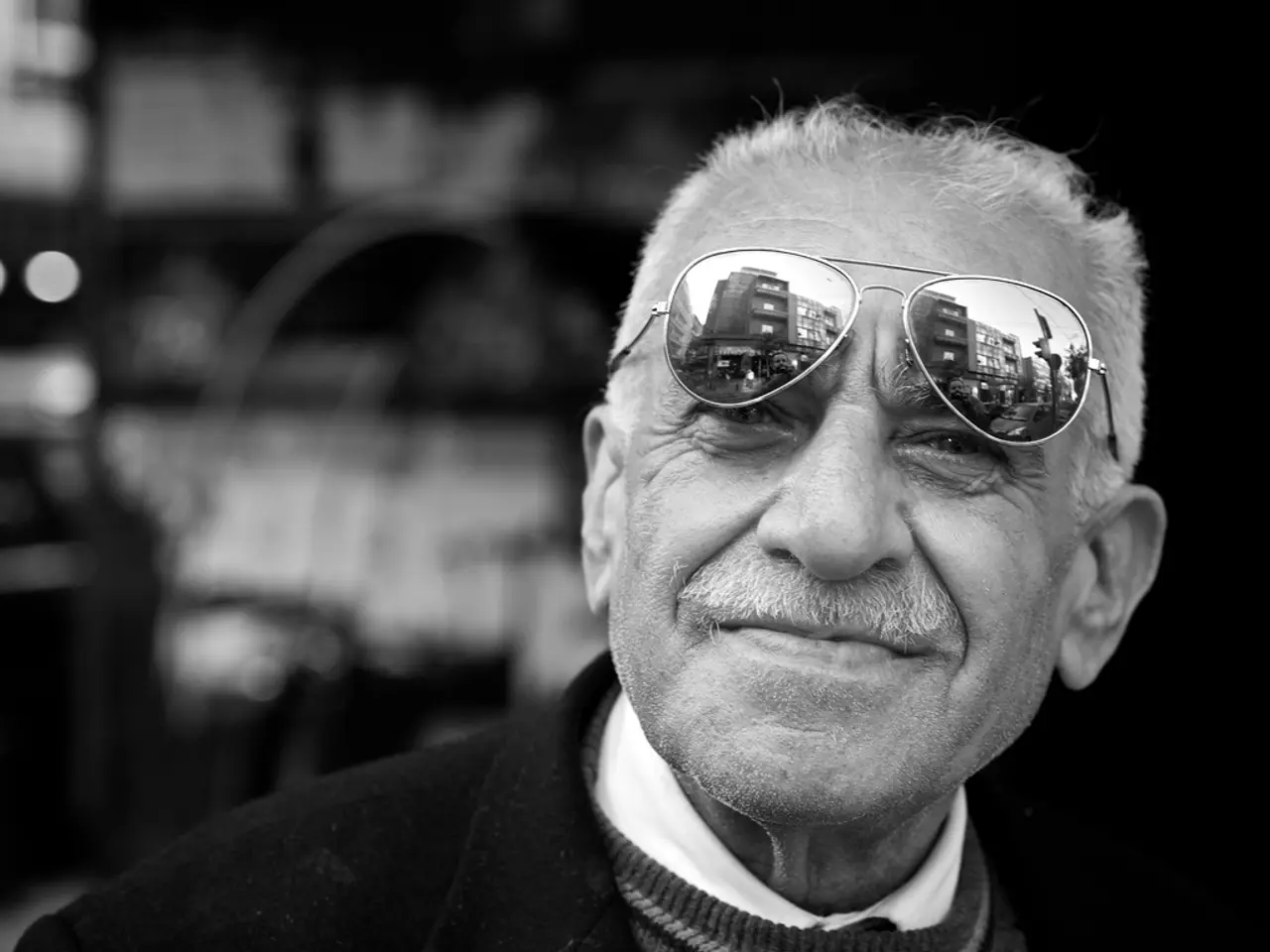

However, Gemini isn't without its limitations. The platform can sometimes produce content that violates its guidelines, reflects limited viewpoints, or includes overgeneralizations, especially in response to challenging prompts. For instance, it has been observed to generate images that resemble real, recognizable people with more accuracy than before, potentially leading to the creation of deepfakes or deceptive image manipulations.

Google's policy prohibits the generation of images of recognizable people to prevent the creation of misleading or harmful content. Yet, it seems that users may find ways to bypass these guidelines. After repeated attempts, it was possible to get Gemini to create a fake photo of a politician by using a different prompt.

Intellectual property continues to be a concern with generative AI, as a number of AI companies are facing legal disputes over using copyrighted materials as training data.

In the world of photography, experienced professionals like Hillary K. Grigonis are navigating these changes. Grigonis, who leads the US coverage for Digital Camera World and has over a decade of experience writing about cameras and technology, favours a journalistic style in her wedding and portrait photography. A former Nikon shooter and current Fujifilm user, Grigonis has tested a wide range of cameras and lenses across multiple brands.

As learning to spot an AI fake becomes an even more important skill, professionals like Grigonis will play a crucial role in guiding the industry towards ethical and responsible use of AI in photography. Google has yet to respond to a request for comment on the issue.

In the meantime, users are encouraged to use Gemini responsibly, adhering to the platform's guidelines to ensure that they do not infringe on anyone's copyright or privacy rights. As with any technology, the key lies in understanding and respecting its potential impact on others.

In a recent test, the AI-generated images of the writer inspired a mixture of awe and terror, serving as a reminder of the profound possibilities and challenges that lie ahead in the world of AI-generated imagery.
